In this article, we dive into the line of best fit, a cornerstone of data science that mathematicians and statisticians developed for understanding trends and patterns in data. From its historical development to its real-world applications, we'll explore the concept, its significance, and its evolution.

Throughout this article, we’ll delve into the mathematical framework, methods for determining the line of best fit, and its role in data visualization and communication. We’ll also touch on its application in predictive modeling and forecasting, as well as the challenges and limitations of the line of best fit.

The Concept of Line of Best Fit and Its Evolution in Data Science

The line of best fit, the line that linear regression produces, has a rich history with roots in the 18th century, when astronomers and mathematicians looked for principled ways to combine imperfect observations. Its first applications were in astronomy and geodesy, such as estimating the orbits of planets and comets; over time it spread to other fields and became a fundamental tool in data science.

The line of best fit is the line that best represents the relationship between two variables, in the sense that it minimizes the sum of the squared errors between the predicted and actual values. This criterion, known as least squares, was first published by the French mathematician Adrien-Marie Legendre in 1805; the German mathematician Carl Friedrich Gauss published his own account in 1809 and claimed to have used the method since 1795.

Gauss's work laid the foundation for the development of linear regression, and it was further refined by other mathematicians and statisticians. For instance, multiple linear regression, which relates one variable to several others at once, was developed around the turn of the 20th century by statisticians including Karl Pearson and George Udny Yule, and Sir Ronald Fisher later put regression on a firm inferential footing.

Mathematicians and Statisticians Who Shaped the Concept

The development of the line of best fit was a collaborative effort of many mathematicians and statisticians. Some notable contributors include:

- Karl Pearson, a British statistician who made significant contributions to the field, built on the least-squares tradition of Legendre and Gauss; in the 1890s he formalized the product-moment correlation coefficient that is still used to measure linear association.

- Ronald Fisher, in a 1922 paper, derived the sampling distribution of regression coefficients, which made it possible to test the significance of regressions on one or several variables.

- Fisher's work built upon the foundation laid by earlier statisticians, including Francis Galton, who introduced the idea of regression in the 1880s while studying heredity.

The contributions of these mathematicians and statisticians have had a lasting impact on the field of data science, and their work continues to influence the development of new methods and techniques.

Early Applications and Adoption in Data Analysis

Beyond its origins in astronomy and geodesy, the line of best fit was adopted in economics to understand the relationship between variables such as income and expenditure, and it found applications in physics, engineering, and the social sciences. The advent of computers and statistical software made it easier to calculate and visualize linear regression models.

In the 1960s and 1970s, linear regression became a staple in data analysis and was widely used in industries including finance, marketing, and healthcare. Statistical software packages such as SPSS and SAS made ordinary least squares (OLS) fits routine, and generalized linear models (GLMs), introduced by John Nelder and Robert Wedderburn in 1972, extended the regression framework to outcomes such as counts and binary responses.

The widespread availability of data and computational power has made linear regression an essential tool in data science, and it continues to be used in a variety of applications today. Its simplicity and the interpretability of its coefficients have made it a fundamental component of data analysis, allowing researchers and analysts to identify patterns, trends, and relationships between variables.

The Mathematical Framework of the Line of Best Fit

The line of best fit is a fundamental concept in data analysis, used to describe the relationship between two variables. It is a mathematical expression that best represents the data points on a scatter plot. The mathematical framework of the line of best fit involves the use of regression analysis and statistical metrics.

The line of best fit is often calculated using the Ordinary Least Squares (OLS) method, which aims to minimize the sum of the squared residuals.

Regression Analysis

Regression analysis is a statistical method used to estimate the relationship between a dependent variable (y) and one or more independent variables (x). In the context of the line of best fit, it is used to find the best-fitting line that minimizes the differences between observed data points and predicted values. A simple linear regression model can be represented by the equation:

y = β0 + β1x + ε

where:

* y is the dependent variable

* x is the independent variable

* β0 is the y-intercept

* β1 is the slope coefficient

* ε is the error term

Regression analysis provides a way to quantify the strength and direction of the relationship between variables.
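As a minimal sketch of what OLS computes for the simple model above, the Python snippet below applies the standard closed-form estimates, β1 = Σ(xi − x̄)(yi − ȳ) / Σ(xi − x̄)² and β0 = ȳ − β1x̄, and cross-checks them against NumPy's `np.polyfit`. The helper name `fit_line` and the five data points are illustrative assumptions, not taken from any dataset.

```python
import numpy as np

def fit_line(x, y):
    """Return (beta0, beta1) for the least-squares line y = beta0 + beta1 * x."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = x.mean(), y.mean()
    # Slope: sum of cross-deviations divided by sum of squared x-deviations
    beta1 = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
    # Intercept: the least-squares line passes through (x_mean, y_mean)
    beta0 = y_mean - beta1 * x_mean
    return beta0, beta1

# Illustrative points scattered around y = 1 + 2x
x = [1, 2, 3, 4, 5]
y = [3.1, 4.9, 7.2, 8.8, 11.0]

beta0, beta1 = fit_line(x, y)
print(f"beta0 = {beta0:.3f}, beta1 = {beta1:.3f}")  # beta0 = 1.090, beta1 = 1.970

# Cross-check: np.polyfit with deg=1 returns [slope, intercept]
slope, intercept = np.polyfit(x, y, deg=1)
print(np.isclose(slope, beta1) and np.isclose(intercept, beta0))  # True
```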

Correlation Coefficients

Correlation coefficients (r) are used to measure the strength and direction of the linear relationship between two variables. A correlation coefficient ranges from -1 to 1, where 1 indicates a perfect positive linear relationship, -1 indicates a perfect negative linear relationship, and 0 indicates no linear relationship.

For example, a correlation coefficient of 0.8 between two variables would indicate a strong positive linear relationship, while a correlation coefficient of -0.4 would indicate a weak negative linear relationship.
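The coefficient most often meant here is Pearson's r, computed as r = Σ(xi − x̄)(yi − ȳ) / √(Σ(xi − x̄)² · Σ(yi − ȳ)²). The sketch below implements that formula directly and checks it against NumPy's `np.corrcoef`; the helper `pearson_r` and the hours/score data are hypothetical.

```python
import numpy as np

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    dx, dy = x - x.mean(), y - y.mean()
    return np.sum(dx * dy) / np.sqrt(np.sum(dx ** 2) * np.sum(dy ** 2))

# Hypothetical data: hours studied vs. exam score
hours = [1, 2, 3, 4, 5, 6]
score = [52, 55, 61, 64, 70, 71]

r = pearson_r(hours, score)
print(f"r = {r:.2f}")  # r = 0.99

# Cross-check against NumPy's correlation matrix (off-diagonal entry)
print(np.isclose(r, np.corrcoef(hours, score)[0, 1]))  # True

# Negating one variable flips the sign of r but keeps its strength
print(np.isclose(pearson_r(hours, [-s for s in score]), -r))  # True
```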

Case Studies

The mathematical framework of the line of best fit has been applied to various real-world problems, including:

- Forecasting Sales: In a study on sales forecasting, researchers used linear regression analysis to model the relationship between sales and advertising expenditure. The resulting line of best fit helped the company to predict future sales and make data-driven decisions.

- Predicting Stock Prices: In another study, researchers used linear regression analysis to predict stock prices based on historical data. The line of best fit helped to identify trends and patterns in the data, supporting more informed investment decisions.

Methods for Determining the Line of Best Fit

In determining the line of best fit, various methods are employed to minimize the difference between observed data points and the predicted values. Among these, three prominent techniques are the least squares method, linear regression, and polynomial regression. Each method has its advantages and limitations, making them suitable for specific scenarios and datasets.

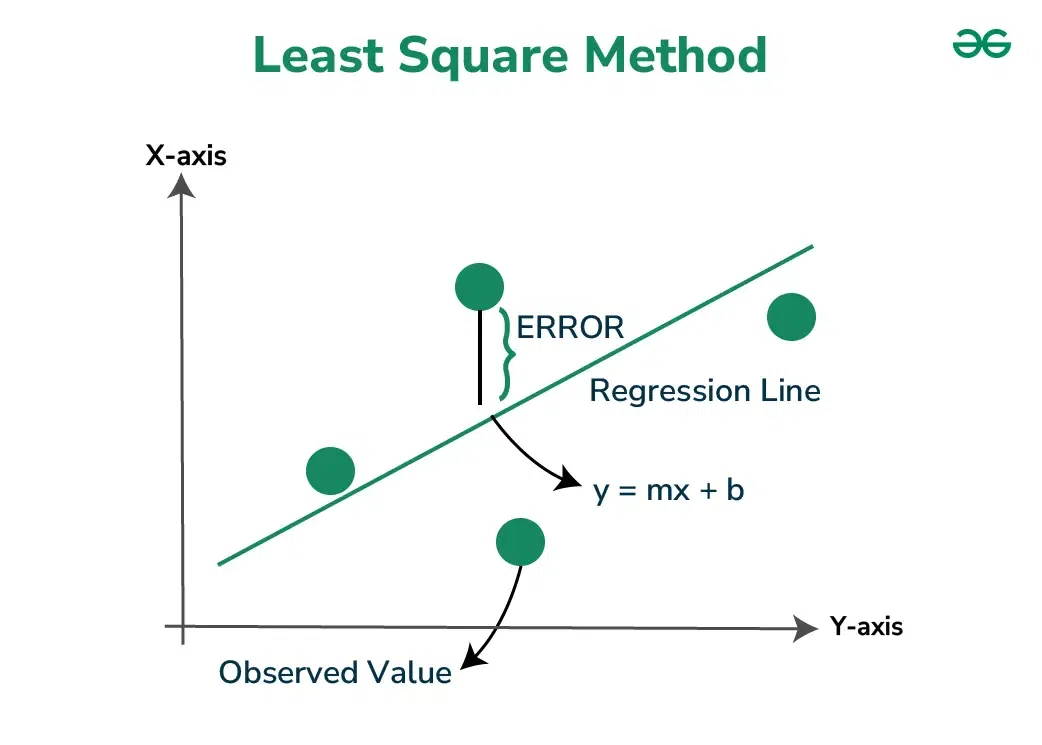

The Least Squares Method

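The least squares method is a fundamental approach used to determine the line of best fit. This method minimizes the sum of the squared errors between the observed data points and the predicted values. For the model

$$y = \beta_0 + \beta_1 x + \varepsilon$$

where β0 is the y-intercept, β1 is the slope, and ε is the error term, least squares chooses β0 and β1 to minimize

$$S(\beta_0, \beta_1) = \sum_{i=1}^{n} \left(y_i - \beta_0 - \beta_1 x_i\right)^2$$

over the n observed pairs (xi, yi).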
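For the simple model, the least-squares estimates have a closed form. Writing the sum of squared errors as S(β0, β1) and setting both partial derivatives to zero gives the following, where x̄ and ȳ are the sample means of x and y:

$$
\begin{aligned}
S(\beta_0, \beta_1) &= \sum_{i=1}^{n} \left(y_i - \beta_0 - \beta_1 x_i\right)^2 \\
\frac{\partial S}{\partial \beta_0} &= -2\sum_{i=1}^{n}\left(y_i - \beta_0 - \beta_1 x_i\right) = 0
&&\Longrightarrow\quad \hat\beta_0 = \bar{y} - \hat\beta_1 \bar{x} \\
\frac{\partial S}{\partial \beta_1} &= -2\sum_{i=1}^{n} x_i\left(y_i - \beta_0 - \beta_1 x_i\right) = 0
&&\Longrightarrow\quad \hat\beta_1 = \frac{\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n}(x_i - \bar{x})^2}
\end{aligned}
$$

The slope formula follows from substituting the intercept result into the second equation and using Σ(xi − x̄) = 0; the intercept result also shows that the fitted line always passes through the point (x̄, ȳ).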

The advantages of the least squares method include its simplicity and the ability to handle large datasets. However, this method assumes that the relationship between the variables is linear, which may not always be the case. Additionally, the least squares method can be sensitive to outliers and noisy data.
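To illustrate that sensitivity, the sketch below (assuming NumPy) fits a least-squares line with `np.polyfit` to ten points lying exactly on y = 1 + 2x, then adds 30 to a single y-value and refits. The data are constructed for illustration only.

```python
import numpy as np

x = np.arange(10, dtype=float)
y_clean = 2.0 * x + 1.0          # points exactly on y = 1 + 2x

y_outlier = y_clean.copy()
y_outlier[-1] += 30.0            # corrupt a single observation (at x = 9)

slope_clean, intercept_clean = np.polyfit(x, y_clean, deg=1)
slope_out, intercept_out = np.polyfit(x, y_outlier, deg=1)

print(f"clean data:   slope = {slope_clean:.2f}, intercept = {intercept_clean:.2f}")
# clean data:   slope = 2.00, intercept = 1.00
print(f"with outlier: slope = {slope_out:.2f}, intercept = {intercept_out:.2f}")
# with outlier: slope = 3.64, intercept = -3.36
```

Changing one point in ten raises the slope by more than 80%, because squaring gives large residuals a disproportionate weight in the sum being minimized.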

Linear Regression

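Linear regression is the statistical model that least squares is most often used to fit. It is called "linear" because it is linear in its coefficients, which means it extends naturally from one independent variable to several:

y = β0 + β1x1 + β2x2 + … + βpxp + ε

where p is the number of independent variables. Because only the coefficients must enter linearly, the variables themselves can be transformed; setting xj = x^j gives the polynomial model y = β0 + β1x + β2x^2 + … + βnx^n + ε, where n is the highest degree of the polynomial. Linear regression is widely used in various fields, including economics, engineering, and finance.

The advantages of linear regression include its interpretable coefficients and well-established tools for inference, such as standard errors and confidence intervals. However, it does not handle missing data on its own, it is sensitive to outliers and to strongly correlated independent variables, and models with many variables need safeguards against overfitting.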
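A minimal sketch of fitting the multiple-variable form by least squares, assuming NumPy's `np.linalg.lstsq`. The design matrix gets a leading column of ones for β0. The data are synthetic, generated from known coefficients plus noise, so the estimates should land close to 3, 1.5, and -2.

```python
import numpy as np

rng = np.random.default_rng(seed=0)
n = 200
x1 = rng.uniform(0, 10, n)
x2 = rng.uniform(0, 5, n)
# Synthetic data generated from known coefficients: beta0 = 3, beta1 = 1.5, beta2 = -2
y = 3.0 + 1.5 * x1 - 2.0 * x2 + rng.normal(0.0, 0.5, n)

# Design matrix: a column of ones for the intercept, then one column per variable
X = np.column_stack([np.ones(n), x1, x2])

# Least-squares solution of X @ beta ≈ y (residuals, rank, singular values unused)
beta, _, _, _ = np.linalg.lstsq(X, y, rcond=None)
print("estimated [beta0, beta1, beta2]:", np.round(beta, 2))
```

Replacing the `x2` column with `x1 ** 2` would fit a quadratic in `x1` with the same call, which is exactly how polynomial regression is fitted.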

Polynomial Regression

Polynomial regression is a form of linear regression that models the relationship between the variables using a polynomial equation. Polynomial regression can capture complex relationships between variables and is widely used in applications such as curve fitting, signal processing, and data analysis.

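The advantages of polynomial regression include its ability to model curved relationships while still being fitted with ordinary least squares. However, results are sensitive to the chosen degree: a high-degree polynomial can fit the noise rather than the underlying trend (overfitting) and can behave erratically outside the range of the observed data.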
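A sketch of polynomial regression as linear regression on expanded features, assuming scikit-learn's `PolynomialFeatures` and `LinearRegression` combined with `make_pipeline`. The curved data are synthetic, and the degree (2) is chosen to match how they were generated; with real data the degree has to be selected, for example by checking error on held-out data.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures

rng = np.random.default_rng(seed=1)
x = np.linspace(-3, 3, 50).reshape(-1, 1)   # 2-D input, as scikit-learn expects
# Synthetic curved relationship: y = 1 - 2x + 0.5x^2 plus noise
y = 1.0 - 2.0 * x.ravel() + 0.5 * x.ravel() ** 2 + rng.normal(0.0, 0.3, 50)

# PolynomialFeatures expands x into [x, x^2]; LinearRegression then fits
# coefficients that enter linearly, so this is still ordinary least squares
model = make_pipeline(PolynomialFeatures(degree=2, include_bias=False), LinearRegression())
model.fit(x, y)

reg = model.named_steps["linearregression"]
print("intercept:", round(float(reg.intercept_), 2))
print("coefficients [x, x^2]:", np.round(reg.coef_, 2))
```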

Selecting the Best Method

To select the best method for a given dataset, consider the following factors:

- The nature of the relationship between variables: If the relationship is linear, a straight line fitted by least squares may be sufficient. If it is curved, polynomial regression (or linear regression on transformed variables) may be more suitable.

- The size of the dataset: Larger datasets can support more complex models, such as higher-degree polynomials or more independent variables, with less risk of overfitting.

- The presence of outliers: Least squares is sensitive to outliers, so inspect them before fitting, or consider a robust alternative such as Huber regression.

- The computational intensity: Ordinary least squares is inexpensive to compute; models with high polynomial degrees or many variables cost more, although this rarely matters for small datasets.

Example:

Suppose we want to model the relationship between the price of a house and its square footage. If price rises roughly in proportion to square footage, a straight line fitted by least squares may be sufficient. If the relationship curves, for example because each additional square foot adds more to the price of larger homes, polynomial regression may be more suitable.
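For the house-price example, one way to choose between a straight line and a polynomial is to compare prediction error on held-out data. The sketch below assumes scikit-learn and uses synthetic prices (in thousands of dollars) generated with a quadratic term, so the sizes, prices, and coefficients are all hypothetical.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures

rng = np.random.default_rng(seed=42)
# Hypothetical houses: size in thousands of sq ft, price in thousands of dollars
size = rng.uniform(0.5, 4.0, 200).reshape(-1, 1)
price = 50 + 80 * size.ravel() + 30 * size.ravel() ** 2 + rng.normal(0, 20, 200)

# Hold out 25% of the houses to measure out-of-sample error
x_train, x_test, y_train, y_test = train_test_split(size, price, test_size=0.25, random_state=0)

test_mse = {}
for degree in (1, 2, 3):
    model = make_pipeline(PolynomialFeatures(degree=degree, include_bias=False), LinearRegression())
    model.fit(x_train, y_train)
    test_mse[degree] = mean_squared_error(y_test, model.predict(x_test))
    print(f"degree {degree}: test MSE = {test_mse[degree]:.1f}")

best = min(test_mse, key=test_mse.get)
print("lowest held-out error at degree", best)
```

With curvature in the data, the degree-2 model should clearly beat the straight line on the test set; going to degree 3 typically buys little or nothing, which is a sign to stop adding complexity.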

Line of Best Fit in Data Visualization and Communication

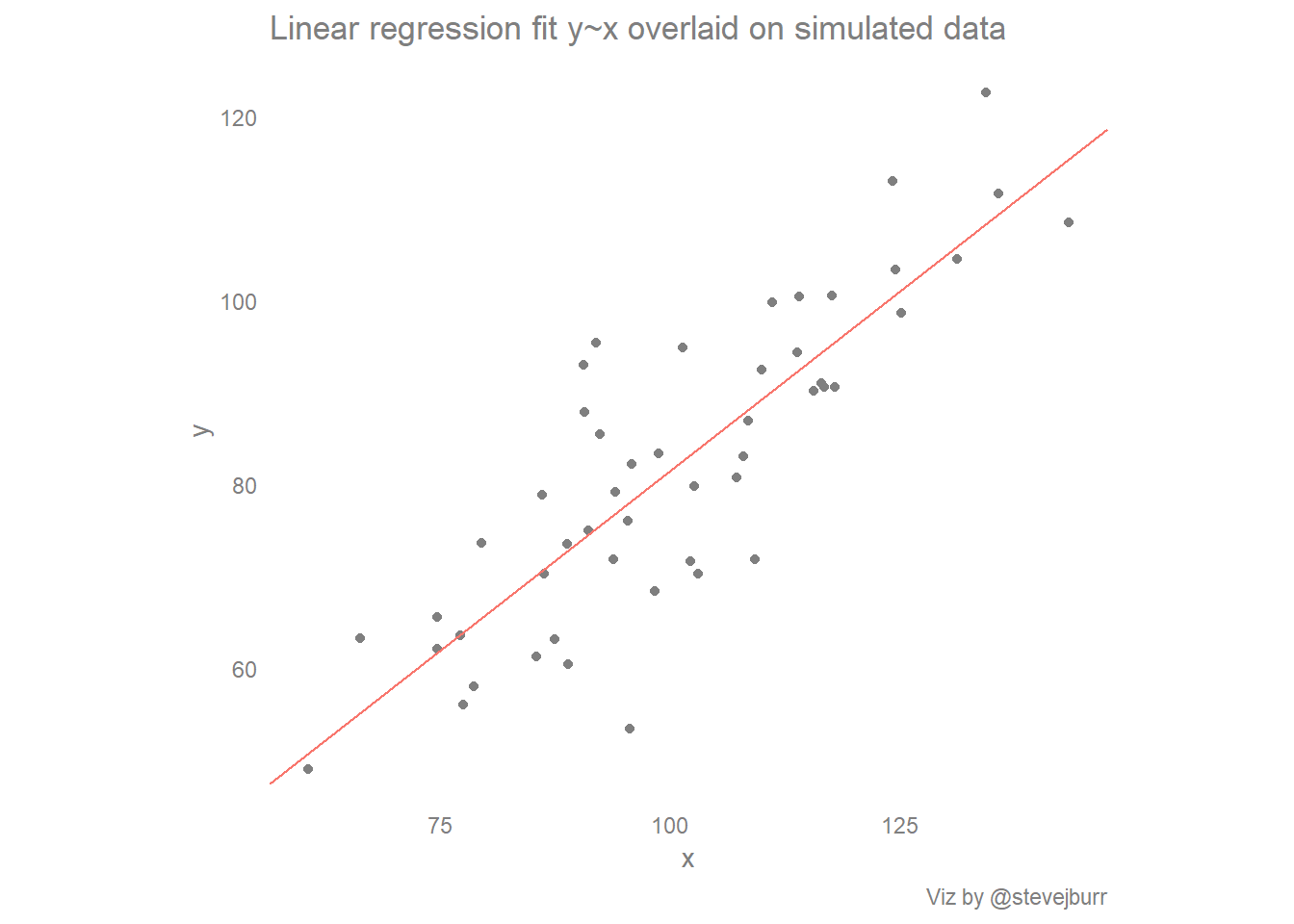

The line of best fit plays a crucial role in data visualization, helping to identify trends, correlations, and patterns within datasets. In data storytelling, it serves as a tool for effectively communicating insights to stakeholders, making complex data more accessible and easier to understand.

In data visualization, the line of best fit is often displayed in scatter plots, helping to illustrate the relationship between two variables. By analyzing the line of best fit, data analysts can identify trends, predict future values, and even make informed decisions based on the insights gained. Additionally, the line of best fit can be used in trend analysis to identify growth, decline, or stability in a particular data series.

Use of Line of Best Fit in Scatter Plots

The line of best fit is commonly used in scatter plots to visualize the relationship between two variables. It helps data analysts to identify if there is a strong correlation between the variables or if the relationship is weak. The line of best fit also provides a visual representation of the trend in the data, making it easier to understand and communicate to stakeholders.
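A minimal sketch of drawing a scatter plot with its line of best fit, assuming NumPy and Matplotlib. The data are synthetic and the output file name `scatter_with_fit.png` is arbitrary; the legend reports the fitted equation and Pearson's r so that viewers can judge the strength of the relationship.

```python
import matplotlib
matplotlib.use("Agg")  # render off-screen so the script runs without a display
import matplotlib.pyplot as plt
import numpy as np

rng = np.random.default_rng(seed=3)
x = rng.uniform(0, 10, 40)
y = 2.5 + 0.8 * x + rng.normal(0, 1.0, 40)   # synthetic, roughly linear data

slope, intercept = np.polyfit(x, y, deg=1)   # least-squares line
r = np.corrcoef(x, y)[0, 1]                  # strength and direction of the trend

fig, ax = plt.subplots()
ax.scatter(x, y, label="observations")
x_line = np.linspace(x.min(), x.max(), 100)  # draw the line only over the observed range
ax.plot(x_line, intercept + slope * x_line, color="red",
        label=f"best fit: y = {intercept:.2f} + {slope:.2f}x (r = {r:.2f})")
ax.set_xlabel("x")
ax.set_ylabel("y")
ax.legend()
fig.savefig("scatter_with_fit.png", dpi=150)
```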

When using the line of best fit in scatter plots, it’s essential to consider factors such as the strength and direction of the correlation. A strong positive correlation indicates that as one variable increases, the other variable also tends to increase. On the other hand, a strong negative correlation suggests that as one variable increases, the other variable tends to decrease.

Effective Communication of Line of Best Fit

Effective communication of the line of best fit is critical in data visualization. It’s essential to consider the limitations and potential biases of the line of best fit when communicating insights to stakeholders. One of the limitations of the line of best fit is that it may not accurately represent the relationship between the variables, especially if the data is noisy or contains outliers.

When communicating the line of best fit, it’s essential to provide context and explanations for the insights gained. This can include discussing the method used to determine the line of best fit, the potential biases and limitations, and the implications of the findings. By providing a clear and comprehensive understanding of the line of best fit, data analysts can ensure that stakeholders are well-informed and able to make informed decisions.

Best Practices for Using Line of Best Fit in Data Storytelling

When using the line of best fit in data storytelling, there are several best practices to keep in mind. Firstly, it’s essential to consider the purpose of the visualization and the message that needs to be communicated. The line of best fit should be used to support the narrative and enhance the understanding of the data.

Secondly, it’s crucial to choose the right type of line of best fit for the data. Depending on the type of data and the insights gained, different types of lines may be more suitable. For instance, a simple linear regression may be sufficient for data with a strong linear correlation, while a non-linear regression may be more suitable for data with a non-linear relationship.

Lastly, it’s essential to provide context and explanations for the line of best fit. This can include discussing the method used to determine the line of best fit, the potential biases and limitations, and the implications of the findings. By following these best practices, data analysts can effectively use the line of best fit in data storytelling and ensure that stakeholders are well-informed.

Common Mistakes to Avoid

When using the line of best fit in data visualization, there are several common mistakes to avoid. One of the most common mistakes is to over-interpret the line of best fit, assuming that it accurately represents the relationship between the variables. It’s essential to consider the limitations and potential biases of the line of best fit and to provide context and explanations for the insights gained.

Another common mistake is to use the line of best fit to make predictions or forecasts without considering the underlying assumptions and limitations. The line of best fit should be used to identify trends and patterns, but it’s essential to consider the potential errors and uncertainties involved in making predictions.
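One way to make those uncertainties explicit for a simple linear regression is a prediction interval for a new observation at x0: ŷ0 ± t · s · √(1 + 1/n + (x0 − x̄)² / Σ(xi − x̄)²), where s is the residual standard error and t is the Student's t critical value with n − 2 degrees of freedom. The sketch below assumes NumPy and SciPy (`scipy.stats.t.ppf`); the helper name `prediction_interval` and the data are illustrative, and the interval assumes roughly normal, constant-variance errors.

```python
import numpy as np
from scipy import stats

def prediction_interval(x, y, x0, confidence=0.95):
    """Point forecast and prediction interval at x0 for a simple linear regression."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(x)
    slope, intercept = np.polyfit(x, y, deg=1)
    residuals = y - (intercept + slope * x)
    s = np.sqrt(np.sum(residuals ** 2) / (n - 2))          # residual standard error
    sxx = np.sum((x - x.mean()) ** 2)
    t_crit = stats.t.ppf(0.5 + confidence / 2, df=n - 2)   # two-sided critical value
    y0 = intercept + slope * x0
    half_width = t_crit * s * np.sqrt(1 + 1 / n + (x0 - x.mean()) ** 2 / sxx)
    return y0, y0 - half_width, y0 + half_width

# Illustrative data observed for x between 1 and 8
x = [1, 2, 3, 4, 5, 6, 7, 8]
y = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1]

for x0 in (4.5, 15.0):  # at the mean of x vs. far beyond the observed range
    y0, lower, upper = prediction_interval(x, y, x0)
    print(f"x0 = {x0}: forecast {y0:.2f}, 95% interval [{lower:.2f}, {upper:.2f}]")
```

The (x0 − x̄)² term makes the interval widen as x0 moves away from the mean of the data, so the forecast at x0 = 15, far beyond the observed range of 1 to 8, comes with a much wider interval than the one at x0 = 4.5.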

By avoiding these common mistakes, data analysts can effectively use the line of best fit in data visualization and ensure that stakeholders are well-informed and able to make informed decisions.

“The line of best fit is a powerful tool for data visualization, but it should be used with caution and consideration for the limitations and potential biases involved.”

The Line of Best Fit in Predictive Modeling and Forecasting

The line of best fit is a powerful tool in predictive modeling and forecasting, enabling analysts to make informed decisions. By extrapolating trends and patterns present in historical data, it provides a simple baseline for predicting future outcomes, provided the historical relationship continues to hold. In this section, we will explore the application of the line of best fit in predictive modeling and forecasting, its benefits, and real-world examples of its use.

Predictive Modeling with the Line of Best Fit

Predictive modeling is a method of forecasting future events or outcomes based on past data. The line of best fit can be employed as a predictive model by extending the trend of the data beyond the observations. This is achieved by using the regression line to estimate the value of the dependent variable for any given value of the independent variable. Predictions within the range of the observed data (interpolation) are generally more reliable than predictions beyond it (extrapolation).

The regression line: ŷ = β0 + β1x

where β0 and β1 are the intercept and slope of the regression line, respectively, and ŷ is the predicted value of the dependent variable. This equation enables analysts to make predictions by substituting a value of the independent variable (x) into the equation and calculating the corresponding predicted value (ŷ).

Applications in Finance, Marketing, and Healthcare

The line of best fit has numerous applications in various industries, including finance, marketing, and healthcare. Its ability to predict future outcomes based on historical data makes it a widely used aid to decision-making.

- In finance, the line of best fit can be used to forecast stock prices or predict the returns of investments.

- In marketing, it can help businesses anticipate the effectiveness of their advertising campaigns or predict the demand for their products.

- In healthcare, it can aid in predicting patient outcomes or determining the likelihood of disease recurrence.

Examples of the Line of Best Fit in Real-World Applications

The line of best fit has been employed in various real-world applications to predict future outcomes. Here are a few examples:

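- Spacecraft navigation teams, including those that guided NASA's Curiosity rover to its 2012 Mars landing, rely on least-squares estimation (the principle behind the line of best fit) to determine trajectories from tracking data.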

- Fund managers employ the line of best fit to forecast stock prices and make informed investment decisions.

- Medical researchers use the line of best fit to predict patient outcomes and develop personalized treatment plans.

Illustrative Case Study

To illustrate the application of the line of best fit in predictive modeling, consider the following hypothetical case study.

The XYZ Bank wanted to predict the number of loan approvals based on the amount of deposits collected in the previous month. By analyzing historical data, they created a line of best fit that showed a strong positive correlation between deposits and loan approvals.

Using the line of best fit, the bank was able to predict with a high degree of accuracy the number of loan approvals for the next month based on the amount of deposits collected in the previous month. This enabled the bank to make informed decisions regarding staffing and resources, ultimately improving their efficiency and customer satisfaction.

Creating a Line of Best Fit Using Real-World Data

Creating a line of best fit using real-world data is a crucial step in understanding and communicating trends and relationships in complex data sets. This process involves cleaning, preprocessing, and modeling the data to create a model that accurately represents the underlying relationship.

For demonstration purposes, let's use a small example dataset relating the amount of rainfall to the yield of wheat, a relationship that agricultural studies examine to understand the impact of rainfall on crop yield.

Data Cleaning and Preprocessing

Data cleaning and preprocessing are essential steps in creating a line of best fit model. These steps involve ensuring that the data is accurate, complete, and in a suitable format for modeling.

- Data cleaning involves identifying and correcting any errors or inconsistencies in the data. This includes checking for missing or duplicate values, and correcting any typos or inaccuracies.

- Preprocessing involves transforming the data into a suitable format for modeling. This includes scaling or normalizing the data, if necessary, and converting categorical variables into numerical variables.

Let's start by loading the dataset with pandas.

```python
import pandas as pd

data = pd.read_csv('rainfall_wheat_yield.csv')  # load the raw rainfall and yield records
```

Now, let’s take a look at the original dataset.

| Rainfall (mm) | Yield (kg/ha) |
|---|---|
| 400 | 5000 |
| 450 | 5500 |
| 300 | 4000 |
| 200 | 2500 |
| 250 | 3000 |

After cleaning and preprocessing, our dataset might look like this.

| Rainfall (m) | Yield (t/ha) |
|---|---|
| 0.4 | 5.0 |
| 0.45 | 5.5 |
| 0.3 | 4.0 |
| 0.2 | 2.5 |
| 0.25 | 3.0 |
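The cleaning and rescaling can be sketched with pandas. Since the CSV file is not provided, this version builds the DataFrame inline from the original values, adding one hypothetical duplicate row and one hypothetical missing value so the cleaning steps have something to remove; the column names are assumptions too. Dividing by 1,000 converts rainfall from millimetres to metres and yield from kg/ha to t/ha.

```python
import numpy as np
import pandas as pd

# Original values, plus a duplicate row and a missing yield (both hypothetical)
data = pd.DataFrame({
    "rainfall_mm": [400, 450, 300, 200, 250, 250, 350],
    "yield_kg_ha": [5000, 5500, 4000, 2500, 3000, 3000, np.nan],
})

# Cleaning: drop rows with missing values, then exact duplicate rows
clean = data.dropna().drop_duplicates().reset_index(drop=True)

# Preprocessing: rescale units (mm -> m, kg/ha -> t/ha)
clean["rainfall_m"] = clean["rainfall_mm"] / 1000
clean["yield_t_ha"] = clean["yield_kg_ha"] / 1000

print(clean[["rainfall_m", "yield_t_ha"]])
```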

Modeling the Line of Best Fit

Now that our dataset is clean and preprocessed, we can create a line of best fit model. In this example, we will use simple linear regression to create a model that predicts wheat yield based on rainfall.

We can use the following Python code to create the model.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

# Rescaled data: rainfall in metres, wheat yield in t/ha
rainfall = np.array([0.4, 0.45, 0.3, 0.2, 0.25]).reshape(-1, 1)  # 2-D: one column per feature
wheat_yield = np.array([5.0, 5.5, 4.0, 2.5, 3.0])                 # 1-D target

model = LinearRegression()
model.fit(rainfall, wheat_yield)
```

Now, let's use our model to predict the yield for each rainfall value.

| Rainfall (m) | Predicted Yield (t/ha) |
|---|---|
| 0.4 | 5.0 |
| 0.45 | 5.6 |
| 0.3 | 3.8 |
| 0.2 | 2.5 |
| 0.25 | 3.1 |
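A minimal sketch of computing predictions with the fitted model's `predict` method, assuming scikit-learn. It refits the model on the rescaled data (rainfall in metres, yield in t/ha) so that the snippet runs on its own, and also prints the fitted slope, intercept, and R² from `model.score`.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

rainfall = np.array([0.4, 0.45, 0.3, 0.2, 0.25]).reshape(-1, 1)  # metres
wheat_yield = np.array([5.0, 5.5, 4.0, 2.5, 3.0])                 # t/ha

model = LinearRegression().fit(rainfall, wheat_yield)

print(f"slope = {model.coef_[0]:.2f}, intercept = {model.intercept_:.2f}")
# slope = 12.21, intercept = 0.09
print(f"R^2 = {model.score(rainfall, wheat_yield):.2f}")  # R^2 = 0.99

# Predicted yield for each observed rainfall value
predicted = model.predict(rainfall)
for x, y_hat in zip(rainfall.ravel(), predicted):
    print(f"{x:.2f} m -> {y_hat:.1f} t/ha")
```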

In conclusion, creating a line of best fit using real-world data involves careful data cleaning, preprocessing, and modeling to ensure that the model accurately represents the underlying relationship. By following these steps, we can create a model that makes predictions and provides valuable insights into complex data sets.

Final Thoughts

As we conclude our journey through the line of best fit, we've seen its significance in understanding trends and patterns in data science. From its historical development to its applications in real-world problems, this concept has shaped the way we analyze and interpret data. So, whether you're a data scientist or a beginner in the field, the line of best fit is an essential tool to master.

FAQ Insights: Line Of Best Fit

What is the line of best fit in data science?

The line of best fit, also known as a regression line, is a mathematical concept used to model the relationship between two variables in a dataset. It represents the line that best predicts the outcome of a dependent variable based on the value of an independent variable.

What are the advantages of using the line of best fit?

The line of best fit has several advantages, including its ability to visualize the relationship between variables, predict outcomes, and identify patterns and trends in data. It's also a useful tool for gauging the strength of correlations, although a fitted line on its own does not establish causation.

What are the limitations of the line of best fit?

The line of best fit has several limitations, including its assumption of a linear relationship, which may not always hold. It's also sensitive to outliers, and a single line relates only two variables, so curved relationships call for polynomial or other non-linear models and problems with several independent variables call for multiple regression.

How is the line of best fit calculated?

The line of best fit is typically calculated using the method of least squares (ordinary least squares, the standard way to fit a linear regression), which minimizes the sum of the squared errors between the predicted and actual values.

What are the real-world applications of the line of best fit?

The line of best fit has numerous real-world applications, including finance, marketing, healthcare, and environmental science. It’s used for predicting stock prices, modeling election outcomes, understanding disease progression, and forecasting weather patterns.