This article is designed to help you answer a common question: which regression equation best fits these data? Regression analysis is a fundamental tool for businesses, researchers, and scientists, with applications ranging from predicting stock prices to forecasting the success of new products, and it plays a vital role in making informed decisions.

But before you can make sense of the data, you need to choose the right regression equation. With so many options available – linear, logistic, polynomial, and more – it’s easy to get overwhelmed. That’s where this article comes in, breaking down the differences between these equations, highlighting their strengths and limitations, and providing practical examples to guide you through the process.

Types of Regression Equations

In statistics, regression equations are crucial tools used to model relationships between variables, estimate the value of one variable based on the others, and make predictions. There are several types of regression equations, each suited for different situations and datasets. In this discussion, we’ll delve into the differences between linear, logistic, and polynomial regression equations, exploring their applications and examples.

Differences Between Linear, Logistic, and Polynomial Regression Equations

The primary distinction between these regression equations lies in the types of relationships they model and the outcome variables. Linear regression equations assume a linear relationship between the variables, while logistic regression equations model binary outcomes, and polynomial regression equations involve nonlinear relationships.

Linear Regression Equations

Linear regression equations are the most common type of regression equation. They assume a linear relationship between the independent variable(s) and the dependent variable. The equation typically takes the form

y = β0 + β1x + ε

where y is the dependent variable, x is the independent variable, β0 and β1 are coefficients, and ε is the error term. Linear regression equations are used in a wide range of applications, such as predicting house prices based on characteristics like size, number of bedrooms, and location.

- Regression Analysis with Multiple Independent Variables: Linear regression can be extended to include multiple independent variables. This is particularly useful when analyzing complex relationships between variables.

- Regression Coefficients: In linear regression, the coefficient of an independent variable represents the expected change in the dependent variable for a one-unit increase in that variable, holding the other independent variables constant. This makes the coefficients a valuable tool for understanding the relationships between variables.

- Model Assumptions: Linear regression relies on certain assumptions, including linearity, independence of the errors, homoscedasticity (constant error variance), and normality of the errors. These assumptions are crucial for ensuring the accuracy and reliability of the model.
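
As a minimal sketch of the points above, the following fits a linear regression with two independent variables by ordinary least squares using NumPy. The data are synthetic and noise-free (an assumption made purely so the recovered coefficients are easy to check), and the variable names are illustrative.

```python
import numpy as np

# Hypothetical, noise-free data generated from y = 2 + 3*x1 - 1*x2
x1 = np.array([0.0, 1.0, 2.0, 3.0, 4.0, 5.0])
x2 = np.array([1.0, 0.0, 2.0, 1.0, 3.0, 2.0])
y = 2.0 + 3.0 * x1 - 1.0 * x2

# Design matrix: a column of ones for the intercept, then one column per predictor
X = np.column_stack([np.ones_like(x1), x1, x2])

# Ordinary least squares: choose beta to minimize the sum of squared residuals
beta, _, _, _ = np.linalg.lstsq(X, y, rcond=None)
residuals = y - X @ beta

print("coefficients (b0, b1, b2):", np.round(beta, 6))
print("largest absolute residual:", float(np.max(np.abs(residuals))))
```

Each slope is read as the change in y for a one-unit change in that predictor with the other held fixed. With real, noisy data the estimates would only approximate the underlying values.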

Logistic Regression Equations

Logistic regression equations are used to model binary outcomes. They model the probability of the outcome as a nonlinear, S-shaped function of the independent variable(s), while the log-odds of the outcome remain linear in them. The equation typically takes the form

y = 1 / (1 + e^(-β0-β1x))

where y is the predicted probability that the outcome occurs, x is the independent variable, β0 and β1 are coefficients, and e is the base of the natural logarithm. Logistic regression equations are used in applications like predicting credit default, predicting disease outcomes, and modeling marketing response.

- Binary Outcome Modeling: Logistic regression is specifically designed for modeling binary outcomes. This makes it an ideal tool for analyzing binary responses.

- Interpretation of Coefficients: Each coefficient in logistic regression represents the change in the log-odds of the outcome for a one-unit increase in the corresponding independent variable. Exponentiating a coefficient gives an odds ratio, which is often easier to interpret.

- Model Fit: Logistic regression model fit is typically evaluated using metrics like accuracy, sensitivity, and specificity.
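
The log-odds interpretation can be checked numerically. The sketch below uses hypothetical coefficients (b0 = -1.0, b1 = 0.5, chosen only for illustration) and shows that a one-unit increase in x adds b1 to the log-odds and multiplies the odds by exp(b1).

```python
import math


def logistic(z: float) -> float:
    """Map a log-odds value z to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def log_odds(p: float) -> float:
    """Inverse of the logistic function: probability to log-odds."""
    return math.log(p / (1.0 - p))


# Hypothetical coefficients, not estimated from any dataset
b0, b1 = -1.0, 0.5

p_at_2 = logistic(b0 + b1 * 2.0)  # log-odds 0.0, so probability 0.5
p_at_3 = logistic(b0 + b1 * 3.0)  # log-odds 0.5

# Effect of moving x from 2 to 3
delta_log_odds = log_odds(p_at_3) - log_odds(p_at_2)
odds_ratio = (p_at_3 / (1.0 - p_at_3)) / (p_at_2 / (1.0 - p_at_2))

print("change in log-odds:", round(delta_log_odds, 6))
print("odds ratio:", round(odds_ratio, 6), "vs exp(b1):", round(math.exp(b1), 6))
```

The change in probability itself is not constant: it depends on where along the S-shaped curve the starting value of x sits.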

Polynomial Regression Equations

Polynomial regression equations model nonlinear relationships between independent and dependent variables by adding powers of the independent variable as extra terms. They are typically used when the relationship between the variables is curved rather than a straight line. For a second-degree polynomial, the equation takes the form

y = β0 + β1x + β2x^2 + ε

where y is the dependent variable, x is the independent variable, β0, β1, and β2 are coefficients, and ε is the error term. Polynomial regression equations are used in applications like modeling the relationship between variables in complex systems, analyzing economic data, and predicting outcomes in competitive sports.

- Nonlinear Relationship Modeling: Polynomial regression is designed to model nonlinear relationships between variables.

- Predictive Power: Polynomial regression can provide a good fit to the data, especially when the relationship between the variables is complex.

- Interpretation of Coefficients: The coefficients in polynomial regression cannot be read one at a time as a constant change in the dependent variable. Because x and x^2 move together, the effect of a one-unit change in x depends on the current value of x; for the quadratic form above, the slope at a given x is β1 + 2β2x.
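
A short sketch of a quadratic fit, assuming NumPy and synthetic noise-free data. It also evaluates the slope at two values of x to illustrate why the coefficients need to be interpreted together.

```python
import numpy as np

# Hypothetical, noise-free data generated from y = 1 + 2x + 0.5x^2
x = np.array([-2.0, -1.0, 0.0, 1.0, 2.0, 3.0])
y = 1.0 + 2.0 * x + 0.5 * x**2

# np.polyfit returns coefficients ordered from the highest power down
b2, b1, b0 = np.polyfit(x, y, deg=2)


def slope_at(x0: float) -> float:
    """Slope of the fitted curve at x0: the derivative b1 + 2*b2*x0."""
    return b1 + 2.0 * b2 * x0


print("b0, b1, b2:", round(b0, 6), round(b1, 6), round(b2, 6))
print("slope at x=0:", round(slope_at(0.0), 6))
print("slope at x=2:", round(slope_at(2.0), 6))
```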

Methods for Evaluating the Fit of Regression Equations

In order to determine whether a regression equation adequately describes the relationship between variables, it is essential to assess its goodness-of-fit. Several tools and techniques can be employed for this purpose. This section discusses the use of residual plots, normality tests, and other diagnostic tools to evaluate the fit of a regression equation.

Residual Plots

Residual plots are a crucial diagnostic tool for evaluating the fit of a regression equation. These plots show the residuals (the differences between observed and predicted values) against the predicted values or against an independent variable. By examining residual plots, researchers can identify potential issues with the model, such as non-linearity, outliers, or non-random errors.

- A curved pattern in a scatter plot of residuals versus predicted (fitted) values suggests non-linearity or a misspecified model.

- A funnel shape in the same plot, where the spread of the residuals changes with the fitted values, suggests non-constant error variance.

- A normal probability plot of the residuals can be used to check for normality of the residuals.
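
To make the idea of a patterned residual plot concrete, the sketch below fits a straight line to deliberately curved synthetic data and summarizes the residuals numerically instead of plotting them. The three-way split of the x range is an illustrative choice, not a standard diagnostic.

```python
import numpy as np

# Hypothetical curved data: y = x^2 on a symmetric grid, with no noise
x = np.linspace(-3.0, 3.0, 31)
y = x**2

# Fit a straight line, which is the wrong functional form for these data
slope, intercept = np.polyfit(x, y, deg=1)
fitted = intercept + slope * x
residuals = y - fitted

# Average residual in the left, middle, and right thirds of the x range
left = float(residuals[:10].mean())
middle = float(residuals[10:21].mean())
right = float(residuals[21:].mean())

print("mean residual (left, middle, right):", round(left, 3), round(middle, 3), round(right, 3))
```

Residuals that are positive at both ends and negative in the middle form the U-shape that signals a missing curved term. A well-specified model would show no such systematic pattern.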

Normality Tests

Normality tests, such as the Shapiro-Wilk test or the Anderson-Darling test, are used to determine whether the residuals follow a normal distribution. This is essential since many statistical tests assume normally distributed residuals.

- A non-normal distribution of residuals can lead to incorrect statistical inferences or inflated Type I error rates.

- Transforming the data to achieve normality can be a solution, but this may impact the model’s interpretability.

Other Diagnostic Tools

Several other diagnostic tools can be used to evaluate the fit of a regression equation, including:

- Histograms of the residuals to check for symmetry and peakedness.

- Box plots of the residuals to identify outliers.

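- Plots of the residuals against each independent variable to check for model misspecification, such as an omitted variable or a missing curved term. Multicollinearity is assessed separately, from the correlations among the independent variables themselves.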
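
The histogram and box plot checks have simple numerical counterparts. The sketch below, assuming NumPy, computes the skewness and excess kurtosis of a set of residuals and flags outliers with the 1.5 × IQR rule that box plots conventionally use. The residual values are made up for illustration.

```python
import numpy as np


def residual_summary(residuals):
    """Return (skewness, excess kurtosis, outliers by the 1.5*IQR rule)."""
    r = np.asarray(residuals, dtype=float)
    centered = r - r.mean()
    sd = centered.std()  # population standard deviation
    skewness = float(np.mean(centered**3) / sd**3)
    excess_kurtosis = float(np.mean(centered**4) / sd**4 - 3.0)
    q1, q3 = np.percentile(r, [25, 75])
    iqr = q3 - q1
    outliers = r[(r < q1 - 1.5 * iqr) | (r > q3 + 1.5 * iqr)]
    return skewness, excess_kurtosis, outliers


# Hypothetical residuals with one unusually large value
example_residuals = [-1.0, -0.5, 0.0, 0.5, 1.0, -0.5, 0.5, 0.0, 6.0]
skewness, excess_kurtosis, outliers = residual_summary(example_residuals)

print("skewness:", round(skewness, 3))
print("excess kurtosis:", round(excess_kurtosis, 3))
print("flagged outliers:", outliers.tolist())
```

Skewness near zero and excess kurtosis near zero are consistent with a symmetric, normal-like shape. Large values in either direction are a prompt to look at the plots more closely.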

Interpreting Diagnostic Results

When interpreting diagnostic results, it is essential to understand the implications of each test or plot. For instance, a non-normal distribution of residuals may indicate that the model’s assumptions are not met, while a systematic pattern in the residuals plotted against an independent variable may suggest that the model is misspecified.

- Failure to satisfy model assumptions, such as normality, can lead to incorrect statistical inferences.

Common Issues Affecting the Accuracy of Regression Equations

Regression equations can be affected by various issues that reduce their accuracy and reliability. These common issues can arise due to the inherent characteristics of the data or the model itself. In this section, we will discuss three key issues that can impact the accuracy of regression equations: multicollinearity, heteroscedasticity, and non-normality.

Multicollinearity

Multicollinearity occurs when two or more independent variables are highly correlated with each other, resulting in unstable and unreliable estimates of the coefficients. This issue can arise due to various reasons, such as:

- Including too many independent variables in the model, which can lead to redundant information.

- Using variables that are highly correlated with each other, such as income and wealth.

- Not checking for multicollinearity before running the regression analysis.

To address multicollinearity, researchers can use various techniques, such as:

- Removing redundant variables from the model.

- Using dimensionality reduction techniques, such as principal component analysis (PCA) or factor analysis.

- Using regularized regression models, such as Lasso or Ridge regression, to penalize the coefficients of highly correlated variables.
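
One common way to detect multicollinearity before choosing a remedy is the variance inflation factor (VIF), which is not named in the text above and is introduced here as an illustration. The sketch below computes it with NumPy by regressing each predictor on the others. The income, wealth, and age values are invented, and the threshold of 10 is a widely used rule of thumb rather than a strict cutoff.

```python
import numpy as np


def vif(X):
    """Variance inflation factor for each column of X (X has no intercept column)."""
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    factors = []
    for j in range(k):
        target = X[:, j]
        others = np.delete(X, j, axis=1)
        A = np.column_stack([np.ones(n), others])
        coef, _, _, _ = np.linalg.lstsq(A, target, rcond=None)
        resid = target - A @ coef
        r_squared = 1.0 - (resid @ resid) / np.sum((target - target.mean()) ** 2)
        factors.append(1.0 / (1.0 - r_squared))
    return np.array(factors)


# Hypothetical predictors: wealth is almost exactly twice income, age is unrelated
income = np.array([30.0, 40.0, 50.0, 60.0, 70.0, 80.0, 90.0, 100.0])
wealth = 2.0 * income + np.array([1.0, -1.0, 2.0, -2.0, 1.0, -1.0, 2.0, -2.0])
age = np.array([25.0, 52.0, 31.0, 47.0, 38.0, 60.0, 29.0, 44.0])

vif_values = vif(np.column_stack([income, wealth, age]))
print("VIF (income, wealth, age):", np.round(vif_values, 1))
```

In this example the two near-duplicate predictors receive very large VIF values while age does not, which points to dropping or combining income and wealth.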

Heteroscedasticity

Heteroscedasticity occurs when the variance of the residuals changes across different levels of the independent variables. This issue can arise due to various reasons, such as:

- A skewed dependent variable, such as income, whose spread grows with its level.

- Outliers or influential observations in the data.

- Model misspecification, such as an omitted variable or the wrong functional form.

To address heteroscedasticity, researchers can use various techniques, such as:

- Transforming the data to stabilize the variance.

- Using weighted least squares (WLS) regression, which assigns different weights to different observations based on their variance.

- Using heteroscedasticity-robust standard errors, such as the Huber-White estimator, which remain valid when the error variance is not constant.
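
Weighted least squares can be sketched in a few lines of NumPy. The example assumes the error standard deviation is proportional to x and therefore uses weights of 1/x^2. In practice the variance structure is rarely known and has to be estimated, so treat both the weights and the data here as illustrative assumptions.

```python
import numpy as np


def weighted_least_squares(X, y, weights):
    """Minimize sum_i w_i * (y_i - x_i @ beta)^2 by rescaling rows with sqrt(w_i)."""
    sw = np.sqrt(np.asarray(weights, dtype=float))
    beta, _, _, _ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
    return beta


# Hypothetical data: y = 1 + 2x plus an error whose size grows with x
x = np.arange(1.0, 7.0)
u = np.array([0.1, -0.1, 0.2, -0.2, 0.1, -0.1])  # fixed "noise" pattern
y = 1.0 + 2.0 * x + x * u
X = np.column_stack([np.ones_like(x), x])

beta_ols = weighted_least_squares(X, y, np.ones_like(x))  # equal weights = OLS
beta_wls = weighted_least_squares(X, y, 1.0 / x**2)       # down-weight noisy points

print("OLS estimate:", np.round(beta_ols, 4))
print("WLS estimate:", np.round(beta_wls, 4))
```

Observations with larger assumed variance contribute less to the fit, so the estimate leans on the more precise points.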

Non-normality

Non-normality occurs when the residuals do not follow a normal distribution, which is a key assumption of linear regression. This issue can arise due to various reasons, such as:

- Non-linear relationships between the independent and dependent variables.

- Outliers or influential observations in the data.

- Skewed or heavy-tailed distributions of the residuals.

To address non-normality, researchers can use various techniques, such as:

- Transforming the data to bring the residuals closer to normality, for example with logarithmic or square root transformations.

- Using non-parametric tests, such as the Wilcoxon rank-sum test, which do not assume normality.

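- Using robust regression methods, such as M-estimation with the Huber loss, which are less sensitive to heavy-tailed errors and outliers. Note that the Huber-White standard error estimator addresses heteroscedasticity rather than non-normality.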

Best Practices for Selecting and Implementing Regression Equations

Selecting and implementing regression equations is a critical step in data analysis, with a direct impact on the accuracy and reliability of conclusions derived from the data. To ensure that regression analysis is conducted effectively, it is essential to adhere to best practices that encompass data quality, sample size, and variable selection.

The quality of data used in regression analysis has a profound effect on the outcomes. Poor data quality, characterized by missing values, outliers, or measurement errors, can lead to biased or unstable regression coefficients, resulting in erroneous conclusions. Hence, it is crucial to scrutinize data for quality control before proceeding with regression analysis. This comprises cleaning and preprocessing data to ensure accuracy, validity, and reliability.

Data Quality Control

To ensure data quality, perform the following steps:

- Inspect data for missing values and outliers and handle them appropriately.

- Verify the accuracy of data by cross-checking against original sources.

- Correct errors if any are found in data, such as typos or incorrect units.

- Transform data if necessary, to meet assumptions of regression analysis.

Additionally, a large and representative sample size is vital for reliable regression results. A small sample size can lead to poor model fit, biased estimates, and lack of generalizability to the population. Therefore, when determining sample size, consider factors such as the desired level of precision, the expected variance of the response variable, and the number of predictor variables.

Sample Size and Representation

To ensure representative sampling, consider:

- The desired precision of estimates and the corresponding sample size requirements.

- The number of independent variables and their inter-correlations, as this may impact estimation variance.

- Stratification or weighting procedures to account for underrepresented groups.

Variable selection is another critical component of regression analysis. The inclusion of irrelevant or redundant variables can negatively impact model fit, estimation accuracy, and interpretability. Therefore, it is essential to carefully select variables based on theoretical knowledge, empirical evidence, or data exploration techniques.

Variable Selection

To ensure optimal variable selection, follow these guidelines:

- Employ domain knowledge and theoretical understanding to identify relevant variables.

- Conduct data exploration techniques, such as dimensionality reduction and correlation analysis, to identify potential variables.

- Use model selection criteria, such as Akaike information criterion (AIC) or Bayesian information criterion (BIC), to evaluate competing models.
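
As a rough sketch of model comparison with information criteria, the code below computes AIC and BIC for a linear and a quadratic fit. It uses the Gaussian least-squares form n·ln(RSS/n) plus a penalty, with additive constants dropped, so only differences between models fitted to the same data are meaningful. The data and the alternating "noise" pattern are synthetic.

```python
import numpy as np


def aic_bic(y, fitted, n_params):
    """AIC and BIC for a least-squares fit, up to an additive constant."""
    n = len(y)
    rss = float(np.sum((y - fitted) ** 2))
    fit_term = n * np.log(rss / n)
    return fit_term + 2 * n_params, fit_term + n_params * np.log(n)


# Hypothetical data: a quadratic trend plus a small alternating disturbance
x = np.arange(-5.0, 6.0)
noise = 0.1 * np.where(np.arange(x.size) % 2 == 0, 1.0, -1.0)
y = 1.0 + 2.0 * x + 0.5 * x**2 + noise

scores = {}
for degree in (1, 2):
    coeffs = np.polyfit(x, y, deg=degree)
    fitted = np.polyval(coeffs, x)
    scores[degree] = aic_bic(y, fitted, n_params=degree + 1)

for degree, (aic, bic) in scores.items():
    print(f"degree {degree}: AIC={aic:.2f}, BIC={bic:.2f}")
```

Lower values are better. Here the quadratic model scores lower on both criteria because the improvement in fit far outweighs the penalty for one extra coefficient.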

By adhering to these best practices, you can ensure that your regression analysis is conducted in a manner that yields reliable and accurate results, and that the conclusions derived from the analysis are trustworthy and actionable.

Furthermore, to avoid common mistakes when selecting and implementing regression equations, be aware of pitfalls such as:

- Data snooping or overfitting, where multiple models are fitted and evaluated on the same dataset.

- Selection bias, where the sample selected is not representative of the target population.

- Failing to account for multicollinearity, where highly correlated predictor variables can lead to unstable estimates.

By being aware of these potential pitfalls, you can take steps to prevent them and ensure that your regression analysis is conducted in a way that is transparent, reproducible, and robust.

“Regression analysis is a powerful tool for modeling complex relationships, but it is not a panacea. It requires careful consideration of data quality, sample size, and variable selection to yield reliable and accurate results.”

By following these best practices and avoiding common mistakes, you can ensure that your regression analysis is conducted in a manner that is credible, reliable, and actionable.

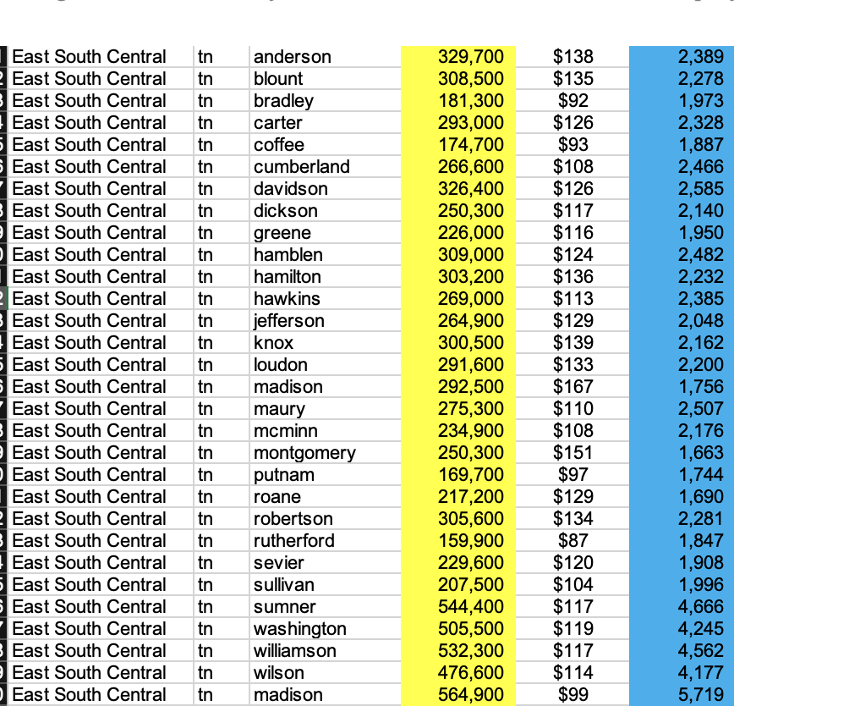

Organizing Data for Regression Analysis

Organizing data for regression analysis is a crucial step in identifying the relationships between variables. Accurate and thorough data organization enables statisticians to select the most appropriate regression model and ensure the accuracy of results.

Regression analysis relies heavily on well-structured and complete data. Proper data organization is essential in this context, as it directly affects the validity of conclusions drawn from the analysis. A well-organized dataset minimizes the risk of errors and ensures data consistency.

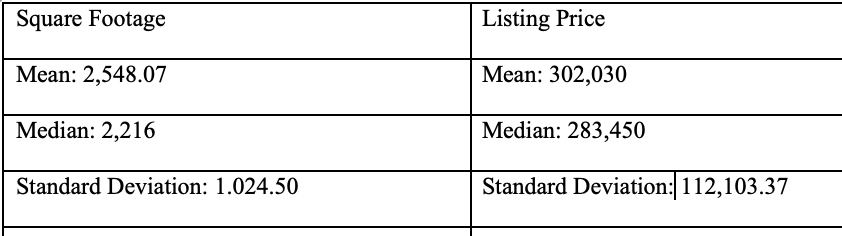

Data Characteristics and Features Required for Regression Analysis

To accurately interpret regression results, it is crucial to understand the characteristics and features of the data being analyzed. These characteristics include:

Data Types

Data types are categorized into numerical, categorical, and time-series. Understanding the data types helps in selecting the correct regression model.

Observations

Each observation includes values for the independent variable (predictor), the dependent variable (response), and any potential confounding variables. The number of observations should be sufficient for the chosen regression model.

Missing Values

Handling missing values is essential in regression analysis. Ignoring them can lead to biased results, while imputing them requires careful consideration to avoid introducing additional bias.

Suspect or Outlier Data

Inconsistencies or outliers can significantly affect the analysis. It’s vital to identify and handle them accordingly to maintain the model’s accuracy.

| Column 1 | Column 2 | Column 3 | Column 4 |
|---|---|---|---|
| Data Variable (Independent) | Dependent Variable (Response) | Predictor Variables | Constant (or Intercept) |
| Observations (Number of Samples) | Data Range (Range of Values) | Confounding Variables | Missing Value Code (NaN or Other Values) |

Data Quality and Preprocessing

Data quality checks involve verifying the accuracy, completeness, and consistency of the data. Preprocessing involves transforming and preparing the data for analysis, including handling missing values and outliers.

Data quality and preprocessing are critical steps in ensuring that regression results are accurate and reliable.

Example Dataset

The following example dataset illustrates the data characteristics and features required for regression analysis.

| ID | Age (Years) | Salary (Dollars) | Location | Time Worked (Years) |
|---|---|---|---|---|
| 1 | 25 | 50000 | Urban | 5 |
| 2 | 30 | 70000 | Rural | 10 |
| 3 | 28 | 60000 | Urban | 7 |
| … | … | … | … | … |
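
The three rows shown above can be turned into the numeric arrays a regression routine expects. The sketch below assumes that salary is the response variable and encodes the categorical Location column as a 0/1 indicator. Both choices are assumptions made for illustration, since the table itself does not say which column is the response.

```python
import numpy as np

# The three rows shown in the example table (the elided rows are not reproduced)
rows = [
    {"id": 1, "age": 25, "salary": 50000, "location": "Urban", "time_worked": 5},
    {"id": 2, "age": 30, "salary": 70000, "location": "Rural", "time_worked": 10},
    {"id": 3, "age": 28, "salary": 60000, "location": "Urban", "time_worked": 7},
]

# Response vector (assumed here to be salary)
y = np.array([r["salary"] for r in rows], dtype=float)

# Design matrix columns: intercept, age, time worked, urban indicator (1 = Urban)
X = np.array(
    [
        [1.0, r["age"], r["time_worked"], 1.0 if r["location"] == "Urban" else 0.0]
        for r in rows
    ]
)

print("X shape:", X.shape)
print("y:", y.tolist())
```

The ID column is left out because it is a label rather than a predictor. Three observations are too few to estimate four coefficients, so a real analysis would need the full dataset.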

Identifying the Most Suitable Regression Equation for Complex Data

When dealing with complex data, it’s essential to identify the most suitable regression equation to accurately model the relationships between variables. This involves comparing the performance of different regression equations on a dataset with multiple independent variables and interaction terms.

In such a scenario, the choice of regression equation can be overwhelming due to the numerous advanced models available. However, the key is to understand the characteristics of the data and select a model that can effectively capture the complex relationships.

Selecting the Right Regression Equation for Complex Data

To identify the most suitable regression equation, consider the following factors:

- Multiple independent variables: When there are multiple independent variables, consider multiple linear regression as a starting point, or generalized additive models if each variable may have its own non-linear effect.

- Non-linear relationships: If the data exhibit non-linear relationships, consider polynomial or spline regression. Logistic regression is the appropriate choice when the outcome itself is binary, not merely when the relationship is curved.

- Interactions between variables: If there are interactions between variables, consider regression equations that include interaction terms, or generalized linear mixed models.

- Complex relationships: If the data exhibits complex relationships, consider using advanced regression models, such as neural networks or Bayesian regression.

When selecting the right regression equation, it’s crucial to consider the research question, data characteristics, and the level of complexity that the model can handle. By doing so, researchers can ensure that the chosen regression equation accurately models the relationships in the data.

Risks and Benefits of Using Advanced Regression Models

Using advanced regression models can provide several benefits, including:

- Improved accuracy: Advanced regression models can capture complex relationships and provide more accurate predictions.

- Flexibility: Advanced regression models can handle multiple interactions and non-linear relationships, making them ideal for complex data.

- Insight: Some advanced regression models, such as generalized additive models, can still show how individual variables affect the response variable, although this is not true of every advanced model.

However, advanced regression models also come with several risks, including:

- Overfitting: Advanced regression models can overfit the data, leading to poor performance on new, unseen data.

- Complexity: Advanced regression models can be complex and difficult to interpret, making it challenging to communicate results to non-technical stakeholders.

- Computational demands: Advanced regression models can require significant computational resources, making them challenging to implement in resource-constrained environments.
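
The overfitting risk can be illustrated with a small experiment. The sketch below, assuming NumPy and a fixed random seed, fits a degree-1 and a degree-9 polynomial to ten noisy points drawn from a straight line and then scores both on separate test points. The data-generating line, the noise level, and the sample sizes are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(0)


def true_fn(x):
    """Hypothetical underlying relationship: a straight line."""
    return 1.0 + 2.0 * x


def mse(y, pred):
    return float(np.mean((y - pred) ** 2))


# Ten noisy training points and fifty noisy test points from the same line
x_train = np.linspace(-1.0, 1.0, 10)
y_train = true_fn(x_train) + rng.normal(0.0, 0.3, size=x_train.size)
x_test = np.linspace(-0.95, 0.95, 50)
y_test = true_fn(x_test) + rng.normal(0.0, 0.3, size=x_test.size)

results = {}
for degree in (1, 9):
    coeffs = np.polyfit(x_train, y_train, deg=degree)
    train_error = mse(y_train, np.polyval(coeffs, x_train))
    test_error = mse(y_test, np.polyval(coeffs, x_test))
    results[degree] = (train_error, test_error)
    print(f"degree {degree}: train MSE={train_error:.4f}, test MSE={test_error:.4f}")
```

The degree-9 curve passes through every training point, so its training error is essentially zero. That says little about new data, and its test error is typically much worse than that of the simple line, which is why models should be compared on held-out data.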

When using advanced regression models, it’s essential to carefully evaluate the benefits and risks and to consider the research question, data characteristics, and the level of complexity that the model can handle. By doing so, researchers can ensure that the chosen regression equation accurately models the relationships in the data and provides valuable insights into the variables of interest.

Evaluating the Performance of Regression Equations

When evaluating the performance of regression equations, consider the following metrics:

- R-squared: R-squared measures the proportion of variance in the response variable that is explained by the independent variables.

- Mean squared error: Mean squared error measures the average squared difference between predicted and actual values, so large errors are penalized more heavily than small ones.

- Mean absolute error: Mean absolute error measures the average absolute difference between predicted and actual values.
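
These three metrics are straightforward to compute directly. A minimal sketch with NumPy and a tiny made-up example follows.

```python
import numpy as np


def r_squared(y_true, y_pred):
    """Proportion of variance in y_true explained by the predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


def mean_squared_error(y_true, y_pred):
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.mean(diff**2))


def mean_absolute_error(y_true, y_pred):
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(diff)))


# Hypothetical actual and predicted values: only the last prediction is off, by 1
actual = [1.0, 2.0, 3.0, 4.0]
predicted = [1.0, 2.0, 3.0, 5.0]

print("R-squared:", r_squared(actual, predicted))          # 0.8
print("MSE:", mean_squared_error(actual, predicted))       # 0.25
print("MAE:", mean_absolute_error(actual, predicted))      # 0.25
```

MSE and MAE coincide here only because the single error has size 1. With larger errors, MSE grows faster than MAE.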

These metrics provide a comprehensive understanding of the regression equation’s performance and can be used to compare the performance of different regression equations.

Best Practices for Selecting and Implementing Regression Equations

When selecting and implementing regression equations, follow these best practices:

- Understand the research question and data characteristics.

- Choose a regression equation that is suitable for the data and research question.

- Consider the level of complexity that the model can handle.

- Evaluate the performance of the regression equation using relevant metrics.

- Interpret the results and communicate them effectively to non-technical stakeholders.

By following these best practices, researchers can ensure that the chosen regression equation accurately models the relationships in the data and provides valuable insights into the variables of interest.

“The choice of regression equation is not just a matter of selecting a model; it’s about understanding the data, research question, and level of complexity that the model can handle.”

Interpreting and Visualizing Regression Results

Interpreting and visualizing regression results is a crucial step in understanding the relationships between variables and making informed decisions. A well-structured visualization of regression output can facilitate effective communication of findings to stakeholders and enhance the credibility of the research. In this section, we will explore the process of generating graphs and tables to display regression output and provide guidelines for selecting the most informative visualizations.

Generating Graphs and Tables

To effectively interpret and communicate regression results, it is essential to generate intuitive and informative graphs and tables. These visual aids can help to highlight key findings, identify relationships between variables, and facilitate the discovery of trends and patterns. Here are some key elements to consider when generating graphs and tables:

- Scatter Plots: These plots are used to visualize the relationship between two continuous variables. By examining the scatter plot, you can determine the direction and strength of the relationship between the variables.

- Residual Plots: These plots are used to evaluate the assumptions of linear regression, such as linearity and equal variance. A well-behaved residual plot should show a random scatter of points around the horizontal line at zero.

- Partial Regression Plots: These plots are used to examine the relationship between a dependent variable and a single independent variable, while controlling for other variables in the model.

These plots can be generated using statistical software packages, such as R or Python, or through specialized software tools, such as Tableau or Power BI.

Guidelines for Selecting the Most Informative Visualizations

When selecting visualizations to present regression results to stakeholders, it is essential to consider the audience and the goals of the presentation. Here are some guidelines to follow:

Consider the Audience

When selecting visualizations, consider the audience and their level of statistical expertise. Simple graphs and tables may be more effective for non-technical audiences, while more complex visualizations may be more suitable for technical audiences.

Focus on Key Findings

Ensure that the visualizations focus on key findings and are not too detailed or cluttered. This will help to prevent overwhelming the audience and facilitate effective communication of the results.

Use Intuitive Visualizations

Use visualizations that are intuitive and easy to understand. Avoid using 3D plots or other complex visualizations that may be difficult for the audience to interpret.

Consider Multiple Views

Consider presenting different views of the data to facilitate a more comprehensive understanding of the results. For example, presenting both the full dataset and a subset of the data may help to highlight specific patterns or trends.

By following these guidelines, you can effectively generate and present visualizations of regression results that facilitate effective communication and enhance the credibility of the research.

“The most effective visualizations are those that are simple, clear, and communicate a clear message.”

Last Word

So, which regression equation best fits these data? By now, you should have a better understanding of the various options available and how to choose the most suitable one for your research question and data type. Remember, the key to successful regression analysis lies in understanding your data, selecting the right equation, and interpreting the results effectively. With these skills in hand, you’ll be well on your way to making data-driven decisions and unlocking the full potential of regression analysis.

Thanks for joining me on this journey through the world of regression equations. Whether you’re a seasoned statistician or just starting out, I hope you found this article informative and engaging. If you have any further questions or topics you’d like to explore, feel free to leave a comment below.

FAQ Overview

What is the main difference between linear and logistic regression equations?

Linear regression equations model continuous outcomes, while logistic regression equations model binary outcomes.

How do I choose the right regression equation for my research question?

Consider the research question, data type, and study goals. Linear regression is suitable for continuous outcomes, while logistic regression is suitable for binary outcomes.

What are some common issues that can affect the accuracy of regression equations?

Some common issues include multicollinearity, heteroscedasticity, and non-normality. Strategies for addressing these issues include data transformation, variable selection, and diagnostic checks.